Introduction

The new wave in data-warehouse automation isn’t more code-generation tools but AI agents that leverage those tools—and the best-practice logic behind them—to deliver value even faster.

In our previous article we showed how AI accelerates Data Vault 2.0 delivery with beVault. Concepts are useful, but architecture makes them real. This follow-up dives into the real-world architectures that turn the vision into working pipelines.

We’ll compare three patterns, from simplest to most advanced:

- simple API orchestration (state machines)

- multi-tool agentic workflows

- MCP servers for scalable, context-aware agents

Each pattern comes with its own trade-offs in complexity, flexibility and upkeep, helping you pick the right fit for your data-team maturity.

We’ll start with a deceptively simple task—extracting metadata from SQL—and show how AI handles it without brittle parsers.

Three approaches to AI-assisted Data Vault implementation

1. Simple orchestration: Anthropic API + beVault API

The use case

Certain tasks are tedious to do manually and cumbersome even programmatically. Extracting business concepts from text, performing sentiment analysis, or parsing metadata from SQL scripts are repetitive, well-defined operations that don’t require human judgment during execution.

How to achieve this

This approach uses a straightforward state machine: retrieve data, call an AI API (like Anthropic’s Claude) with a structured prompt, receive a formatted response, and update your system via API. No human interaction required, just sequential API orchestration.

Example: Send a SQL script to Claude with a prompt requesting structured metadata (table names, columns, relationships). Receive JSON output. Parse and insert into beVault’s metadata catalog.
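
The steps in this example can be sketched as a small linear pipeline. This is a minimal illustration under stated assumptions, not actual beVault or Anthropic client code: `call_model` and `push_metadata` are hypothetical callables standing in for the Claude request and the beVault API call, and the JSON keys (`tables`, `columns`, `relationships`) are an assumed output format.

```python
import json
from typing import Callable

# Assumed prompt wording; adapt it to the metadata your catalog needs.
PROMPT_TEMPLATE = (
    "Extract metadata from the SQL script below. Reply with JSON only, "
    'using the keys "tables", "columns" and "relationships".\n\n{sql}'
)


def extract_metadata(
    sql: str,
    call_model: Callable[[str], str],
    push_metadata: Callable[[dict], None],
) -> dict:
    """Run the linear pipeline: prompt -> model -> parse -> update."""
    prompt = PROMPT_TEMPLATE.format(sql=sql)  # 1. build the structured prompt
    raw = call_model(prompt)                  # 2. call the AI API
    metadata = json.loads(raw)                # 3. parse the formatted response
    push_metadata(metadata)                   # 4. update the target system
    return metadata


# Stand-ins so the sketch runs without network access (hypothetical data).
def fake_model(prompt: str) -> str:
    return json.dumps(
        {"tables": ["customer"], "columns": ["id", "name"], "relationships": []}
    )


catalog = []  # plays the role of the metadata catalog
result = extract_metadata(
    "CREATE TABLE customer (id INT, name TEXT);", fake_model, catalog.append
)
```

In a real deployment, `call_model` would wrap a request to Claude and `push_metadata` would wrap an HTTP call to the beVault API. Both are left abstract here because their exact endpoints and payloads are outside the scope of this overview.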

Pros

- Quick to implement: Minimal infrastructure, simple linear logic

- Low complexity: No agent frameworks or tool configuration needed

- Predictable: Single-purpose automation with clear inputs and outputs

- Immediate ROI: Automate tedious tasks that were previously manual

Cons

- No adaptability: Fixed workflow with no decision-making capability

- Limited error handling: Malformed responses cause failures

- Single-purpose only: Each task requires separate implementation

- No human interaction: Cannot ask clarifying questions or iterate
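
The "limited error handling" drawback can be softened with a thin validation layer around the model call. The sketch below is one possible approach, not a prescribed one: it rejects replies that are not valid JSON or that lack the expected keys, and retries a bounded number of times. The key names and the `call_model` callable are assumptions made for illustration.

```python
import json
from typing import Callable, Optional

REQUIRED_KEYS = {"tables", "columns", "relationships"}  # assumed schema


def parse_or_none(raw: str) -> Optional[dict]:
    """Return the parsed metadata, or None if the reply is unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or not REQUIRED_KEYS.issubset(data):
        return None
    return data


def call_with_retry(
    prompt: str, call_model: Callable[[str], str], max_attempts: int = 3
) -> dict:
    """Call the model until a valid reply arrives or attempts run out."""
    for _ in range(max_attempts):
        data = parse_or_none(call_model(prompt))
        if data is not None:
            return data
    raise ValueError(f"no valid JSON after {max_attempts} attempts")


# Hypothetical replies: the first is malformed, the second is valid.
replies = iter(
    [
        "Sure! Here is the JSON you asked for:",
        '{"tables": ["customer"], "columns": [], "relationships": []}',
    ]
)
result = call_with_retry("extract metadata", lambda _prompt: next(replies))
```

The workflow stays fixed and single-purpose, so the other drawbacks in the list still apply. The retry only keeps one malformed response from failing the whole run.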

When to use: You have repetitive, well-defined tasks with stable formats. You want quick wins without significant investment. Your team is starting its AI journey and needs to build confidence.

[Detailed implementation guide in: “Automating Metadata Extraction with AI: No More SQL Parsers”]

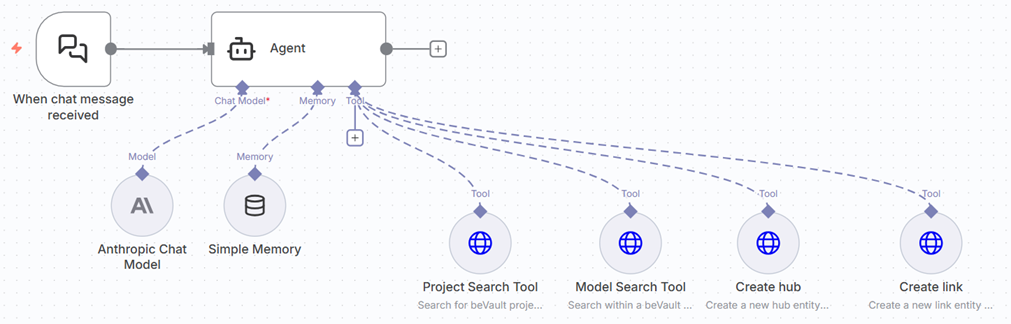

2. Agentic approach: AI agents with direct API integration

The use case

Interactive data modeling requires back-and-forth dialogue, iterative refinement, and technical expertise. Business analysts want to design information marts but lack SQL knowledge. Data engineers spend hours on repetitive model implementation—creating hubs, links, satellites, and mappings through UI clicks. Both scenarios benefit from AI assistance that understands context and can use multiple tools.

How to achieve this

Build AI agents that can reason about goals, select appropriate tools from a set of available APIs, and iterate toward solutions. These agents maintain conversation context, make decisions based on intermediate results, and adjust their approach as needed.
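
The reason, select, iterate cycle described above can be sketched as a short loop. This is a simplified illustration: the two tools are hypothetical placeholders for beVault API calls, and `scripted_decide` is a hard-coded stand-in for the LLM so the example runs on its own. In a real agent, the decision step would be a model call that receives the conversation history and the tool definitions.

```python
from typing import Callable, Dict, List


# Hypothetical tools; a real agent would wrap beVault API calls here.
def list_hubs(_args: dict) -> str:
    return "customer, order"


def create_link(args: dict) -> str:
    return f"link created between {args['left']} and {args['right']}"


TOOLS: Dict[str, Callable[[dict], str]] = {
    "list_hubs": list_hubs,
    "create_link": create_link,
}


def run_agent(
    goal: str, decide: Callable[[List[dict]], dict], max_steps: int = 5
) -> str:
    """Loop until the decision step returns a final answer."""
    history: List[dict] = [{"role": "user", "content": goal}]
    for _ in range(max_steps):
        action = decide(history)  # reason over the context so far
        if action["type"] == "final":
            return action["text"]
        result = TOOLS[action["tool"]](action["args"])  # use the chosen tool
        history.append({"role": "tool", "name": action["tool"], "content": result})
    raise RuntimeError("agent did not finish within max_steps")


def scripted_decide(history: List[dict]) -> dict:
    """Stand-in for the LLM: inspect first, then act, then report."""
    if len(history) == 1:
        return {"type": "tool", "tool": "list_hubs", "args": {}}
    if len(history) == 2:
        args = {"left": "customer", "right": "order"}
        return {"type": "tool", "tool": "create_link", "args": args}
    return {"type": "final", "text": "Done: " + history[-1]["content"]}


answer = run_agent("Link customers to their orders", scripted_decide)
```

The `max_steps` cap is a design choice worth keeping in any variant: it bounds cost and guards against an agent that never converges.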

Example use cases:

- Information mart design: Analyst describes needs in plain language. Agent generates production-ready SQL following Data Vault conventions.

- Model implementation: Engineer describes a data model. Agent creates all hubs, links, satellites, and mappings via the beVault API, so work that takes an hour manually is completed in two minutes.

- Source analysis: Team needs to understand a new data source. Agent explores structure, identifies business keys, and suggests model design.

Pros

- Interactive problem-solving: Back-and-forth dialogue for iterative refinement

- Reduced technical barriers: Business users work in natural language

- Dramatic productivity gains: 30× acceleration on repetitive implementation tasks (an hour of manual work reduced to two minutes in the model implementation example)

- Context-aware: Agents understand project specifics and adjust accordingly

- Multi-purpose: Single agent handles varied tasks with appropriate tool selection

Cons

- Complex configuration: Each API endpoint requires detailed tool definitions

- Platform-specific setup: Moving to a different agent framework means rebuilding everything

- Higher token usage: More tools and decision points increase LLM costs

- Maintenance burden: API changes require updating multiple tool configurations

- Unpredictability: More autonomy means less deterministic behavior
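
To make the configuration cost concrete, here is what a single tool definition might look like. The `name` / `description` / `input_schema` layout follows the tool format used by Anthropic's Messages API; the `create_hub` tool and its fields are hypothetical and do not describe the actual beVault API. Other agent frameworks expect different shapes, which is where the platform-specific rework comes from.

```python
import json

# Hypothetical tool wrapping one API endpoint. Every endpoint the agent
# may call needs a definition of this kind.
create_hub_tool = {
    "name": "create_hub",
    "description": (
        "Create a Data Vault hub for one business concept. "
        "Use it only after confirming the hub does not already exist."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "name": {
                "type": "string",
                "description": "Hub name, for example 'customer'.",
            },
            "business_key": {
                "type": "string",
                "description": "Column that uniquely identifies the concept.",
            },
        },
        "required": ["name", "business_key"],
    },
}


def missing_fields(tool: dict, arguments: dict) -> list:
    """Return the required fields absent from a tool call's arguments."""
    return [f for f in tool["input_schema"]["required"] if f not in arguments]


# The definition must be JSON-serializable to be sent with a request.
payload = json.dumps(create_hub_tool)
gaps = missing_fields(create_hub_tool, {"name": "customer"})
```

Multiply this by every endpoint for hubs, links, satellites, and mappings, and both the maintenance burden and the token overhead listed above become easier to see.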

When to use: You need interactive capabilities. Productivity gains justify configuration effort. Your team has technical capacity for API integration work. You’re comfortable with some platform lock-in while learning what works.

[Complete implementation guide in: “Building Interactive AI Agents for Data Vault: The Direct API Approach”]

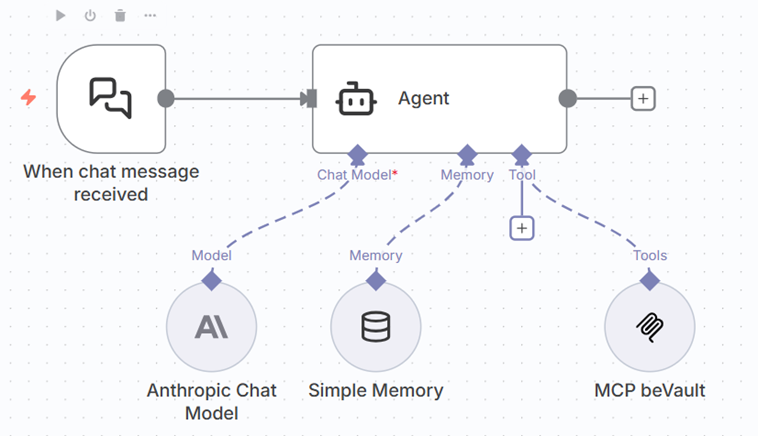

3. AI agents with MCP servers

Understanding MCP

The Model Context Protocol (MCP) is an open standard that defines how AI agents interact with external systems. Instead of configuring raw API endpoints as tools, you build an MCP server that acts as an intelligent gateway between agents and your APIs.

The MCP server can encapsulate complex API orchestration internally and expose high-level, business-friendly tools to agents.

The use case

Organizations planning multiple AI agents face a maintenance nightmare with direct API integration. Each agent platform requires separate tool configuration. API changes cascade across every agent. Teams want to experiment with different agent frameworks without rebuilding integrations each time.

How to achieve this

Build an MCP server that wraps your APIs with intelligent orchestration logic. This server exposes simplified, high-level tools that speak business language. Any MCP-compatible agent platform (Claude Desktop, n8n, or custom workflows) can then connect to your server without platform-specific configuration.
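
The core idea, one high-level tool that fans out into several low-level API calls, can be illustrated in plain Python. This sketch leaves out the protocol layer so that it runs without extra dependencies. In an actual server, a function like `implement_model` would be registered as a tool through an MCP SDK. `FakeVaultApi` and its methods are hypothetical stand-ins, not the beVault API.

```python
from typing import Dict, List


class FakeVaultApi:
    """In-memory stand-in for low-level API calls (hypothetical methods)."""

    def __init__(self) -> None:
        self.calls: List[str] = []

    def create_hub(self, name: str) -> None:
        self.calls.append(f"POST hub {name}")

    def create_link(self, name: str, hubs: List[str]) -> None:
        self.calls.append(f"POST link {name} ({', '.join(hubs)})")


def implement_model(
    api: FakeVaultApi, hubs: List[str], links: Dict[str, List[str]]
) -> str:
    """High-level tool: one agent call becomes many API calls."""
    # Validate before touching the API so a bad request changes nothing.
    for link_name, linked_hubs in links.items():
        unknown = [h for h in linked_hubs if h not in hubs]
        if unknown:
            raise ValueError(f"link {link_name} references unknown hubs: {unknown}")
    for hub in hubs:
        api.create_hub(hub)
    for link_name, linked_hubs in links.items():
        api.create_link(link_name, linked_hubs)
    # Return a compact summary instead of raw API responses.
    return f"created hubs={len(hubs)} links={len(links)}"


api = FakeVaultApi()
summary = implement_model(
    api, ["customer", "order"], {"customer_order": ["customer", "order"]}
)
```

The agent sees a single tool and a one-line result, while the ordering, validation, and individual requests stay inside the server. Any agent platform that connects to the server inherits that behavior.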

Pros

- Reduced maintenance cost: Update orchestration once in the MCP server, all agents benefit

- Technology portability: Switch agent platforms without rebuilding integrations

- Lower token usage: Fewer, simpler tools with optimized responses reduce LLM costs

- Encapsulated complexity: API intricacies hidden from agents

- Easier agent creation: High-level tools are clearer and more reliable

- Future-proof: Standards-based approach adapts as technology evolves

- Cost optimization: Simplified tool landscape directly reduces token consumption and API costs

Cons

- Upfront investment: Building an MCP server requires development effort

- Technical capability required: Need expertise to design and maintain the server

- Initial complexity: Teams must first understand the protocol and its architecture

- Emerging standard: MCP ecosystem is still maturing

When to use: You’re planning multiple agents. Platform independence matters. Long-term maintainability is a priority. You have the technical capability to build the server. You want to minimize operational costs over time.

Current status: The beVault MCP server is at the proof-of-concept stage, demonstrating significant gains in simplicity, user experience, and cost efficiency.

[Technical architecture and implementation in: “MCP Servers: The Future of Portable AI Agent Architecture”]

Choosing your path forward

The right approach depends on your team’s maturity, technical capacity, and strategic goals:

Start with simple orchestration if:

- You have specific, repetitive tasks to automate

- You want immediate wins with minimal investment

- Your team is new to AI integration

Move to agentic workflows when:

- You need interactive, iterative problem-solving

- Productivity gains justify configuration complexity

- You have technical resources for API integration

Invest in MCP servers when:

- You’re planning multiple agents or want to experiment with platforms

- Long-term maintenance costs concern you

- Token usage and LLM costs are significant

- Platform independence is strategically important

Many organizations will progress through these stages as their AI maturity grows.

What’s next

Next in this series:

- “Automating Metadata Extraction with AI: No More SQL Parsers” – Practical guide to simple orchestration with complete examples

- “Building Interactive AI Agents for Data Vault: The Direct API Approach” – Deep dive into agentic architectures with real implementations

- “MCP Servers: The Future of Portable AI Agent Architecture” – Technical guide with the beVault MCP server as a case study

Start experimenting today: Identify a repetitive task in your workflow. Try simple orchestration for quick wins, or build an agent for interactive work. Learn what fits your team’s capabilities and needs.

The future of Data Vault implementation isn’t about replacing data engineers—it’s about amplifying their capabilities and making data modeling accessible to more people in your organization.