The power of simple AI orchestration

Some tasks are perfect for AI automation: they’re tedious to do manually, require pattern recognition rather than business judgment, and produce structured outputs. Extract key concepts from a document. Perform sentiment analysis on customer feedback. Parse SQL scripts to identify tables and columns.

These tasks share a common pattern: send input to an AI model, receive structured output, use the result. No complex decision-making. No iterative refinement. Just simple orchestration—call an API, process the response, update your system.

This approach is remarkably powerful for its simplicity. Large language models trained on vast amounts of text already understand:

- Business terminology and concepts in documents

- Emotional sentiment in natural language

- SQL syntax, structure, and semantics

What once required custom code and fragile regex patterns now takes a single API call with a well-crafted prompt.

Speed up your documentation process in beVault

For beVault users, information mart documentation is essential but time-consuming. You write SQL code in the “Code” tab that creates views or tables in your database. This SQL is what actually gets executed.

To make your data products self-documenting and maintain proper lineage, beVault provides a “Columns” tab where you document each output column and its lineage, including the transformation applied to the column.

This metadata doesn’t affect execution, it’s purely informational. But it’s incredibly valuable for:

- Data governance: Understanding where each field comes from

- Lineage visualization: Seeing data flow in the Lineage tab

- Self-service analytics: Helping business users understand available data

- Team collaboration: Documenting transformation logic for future maintenance

The opportunity? A complex information mart script with 50 columns can take an hour to document manually. With AI automation, that drops to just 2-3 minutes, a 90%+ time reduction while improving consistency and accuracy.

The solution: Simple AI orchestration that parses your SQL code and automatically extracts metadata in the exact format beVault needs.

The simple orchestration approach

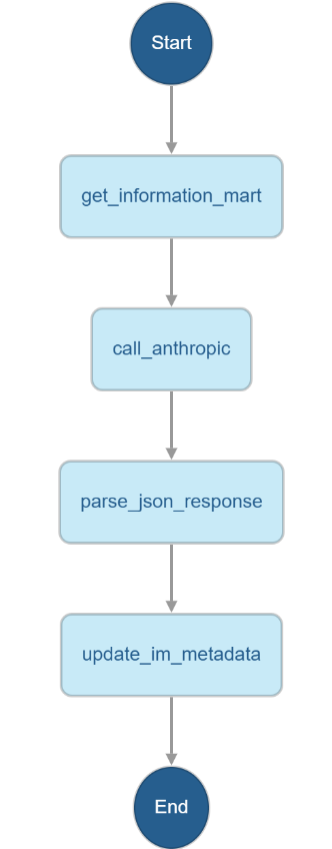

This pattern connects two APIs through a linear state machine:

- Retrieve the information mart script’s SQL from beVault

- Send the SQL to Anthropic Claude with a structured prompt

- Parse the JSON response containing metadata

- Update the beVault columns metadata via API

No complex agent logic. No human interaction during execution. Just automated extraction that transforms your SQL code into structured beVault metadata.

Designing the perfect prompt for beVault

The success of this automation hinges entirely on prompt engineering. Your prompt must guide Claude to produce JSON that maps directly to beVault’s columns structure.

The five critical components

Based on best practices for structured data extraction, your prompt should include:

- Clear role definition: Sets the context and expertise level for the AI.

- Explicit format instruction: Prevents the AI from adding prose that breaks JSON parsing.

- Schema definition: Specifies the exact structure beVault expects with clear field names and types. Alternatively, you can use the structure output feature available on some models, such as Claude.

- Edge case handling: Guides the AI through ambiguous scenarios like complex transformations, CTEs, and nested queries.

- Constraints: Reinforces the output format to ensure clean parsing.

Here’s a simplified version showing the essential pattern:

You are a SQL code analyzer specialized in extracting data lineage metadata from

Information Mart scripts.

Your task is to analyze the provided JSON response containing an Information Mart

script and generate an API call payload to update its metadata.

## Input Analysis

You will receive a JSON object containing:

- A "code" field with SQL DDL/DML statements

- Existing metadata including columns array (which may be incomplete)

- Various IDs and configuration data

## Your Tasks

1. **Technical Description Generation**: If the "technicalDescription" field is empty, generate a concise technical description based on the SQL code analysis.

2. **Table name Generation**: If the "tableName" field is empty, extract the table name or view created by the SQL code

3. **Complete Column Analysis**: Extract ALL columns from the SELECT statement in the SQL code and generate a comprehensive columns array with data lineage information.

## Output Requirements

Generate ONLY a JSON payload that contains ALL the key/value pairs from the input API response EXCEPT "_links", "_embedded" and "code". For the columns and SourceColumns, remove the one that are not used in the "code" anymore. This payload will be used for a POST update call. The structure should be:

```json

{

"name": "existing_name_value",

"id": "existing_id_value",

"businessDescription": "existing_businessDescription_value",

...

}

Column Analysis Rules

Column Identification: Extract each column from the SELECT clause, including:

Direct column references (e.g., info2.contact_name as customer)

Calculated fields (e.g., info.unit_price * info4.quantity as sales_amount_target)

Functions and expressions

...

Critical Output Requirement

RESPOND WITH ONLY THE JSON PAYLOAD. DO NOT INCLUDE ANY EXPLANATORY TEXT, COMMENTS,

OR ADDITIONAL CONTENT.

Important Notes

Preserve ALL existing key/value pairs from the input

...

Analyze the provided JSON thoroughly and generate the complete metadata

update payload that preserves all original data

while completing the missing column information."

For a complete, production-ready prompt that handles all beVault-specific patterns and edge cases, see the beVault documentation:

Complete Prompt Template with Examples

The key is balancing specificity (so Claude knows exactly what to extract) with clarity (so it doesn’t get confused by overly complex instructions).

What this delivers for beVault users

Time savings:

- Manual documentation: 30-60 minutes per script

- AI automation: 2-3 minutes per script

- 90%+ time reduction

Improved data governance:

- Complete lineage visualization in beVault’s Lineage tab

- Consistent metadata format across all scripts

- No missing documentation due to manual oversight

- Transformation logic captured accurately

Better collaboration:

- Business users can understand data products without reading SQL

- New team members can quickly grasp existing scripts

- Code reviews focus on logic, not documentation completeness

Reduced errors:

- No typos in column names or entity references

- No mismatches between SQL code and documentation

- Transformation logic captured exactly as written

Limitations and when this works best

This approach excels when:

- You want quick wins without complex infrastructure

- Occasional validation by humans is acceptable

- Scripts have stable, well-formed SQL

This approach struggles when:

- You want interactive refinement based on business context

- Column names are highly ambiguous without business knowledge

Pro tip: Start with your most standard, straightforward scripts. Build confidence with the automation. Then tackle more complex cases with human review as a safety net.

Moving beyond simple AI automation

Once you’ve automated column documentation, you’ll naturally encounter scenarios requiring more sophistication:

- Interactive script creation: Business users describing needs, AI generating complete SQL

- Model-to-script generation: Automatically creating information mart scripts from Data Vault models

- Optimization suggestions: AI analyzing query patterns and suggesting improvements

This is where agentic workflows shine. The next article in this series—“Building Interactive AI Agents for Data Vault: The Direct API Approach”—explores these interactive capabilities.