From automation to interaction

The previous article showed how simple orchestration automates repetitive tasks like metadata extraction. But what happens when you need back-and-forth dialogue? When requirements emerge through conversation rather than arriving fully formed? When business users want to describe needs in natural language and receive production-ready implementations?

This is where agentic architectures excel. Instead of fixed state machines, you build systems that reason about goals, select appropriate tools, and iterate toward solutions through conversation.

What makes a system “Agentic”?

An agentic system goes beyond executing predefined workflows—it makes decisions based on context and adjusts its approach dynamically. Three core capabilities define it:

- Tool Use: The agent can select from multiple available tools (APIs, functions, databases) depending on what the current situation requires. Instead of following a fixed sequence, it chooses the right tool for each step.

- Decision-Making: After each action, the agent evaluates the result and decides what to do next. Did the API call succeed? Is additional information needed? Should it try a different approach?

- Iterative Problem-Solving: The agent refines its approach through multiple steps until it achieves the objective or reaches a stopping condition. It can handle clarifying questions, adjust based on feedback, and recover from errors.

The key difference: Simple orchestration follows a predetermined path. Agentic systems chart their own course toward a goal.

2.1 Agent for information mart creation: Accelerating repetitive SQL development

The challenge

Business users know what insights they need but lack the SQL skills to query Data Vault models. The gap between “I need customer data with industry and employee count” and production-ready SQL blocks self-service.

Technical users spend hours writing repetitive SQL. Information mart views follow predictable patterns—the same joins between hubs, satellites, and PIT tables, the same snapshot handling, the same column aliasing. This repetitive work is perfect for AI automation.

The solution architecture

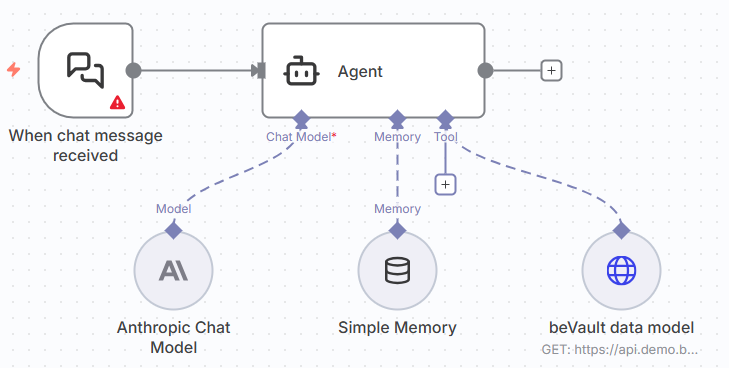

An AI agent bridges both challenges with a simple architecture:

A single tool: To access to beVault’s data model metadata like schema names, table names, column names, data types, business types. In this example, we used an API over the table meta.columns generated by beVault. But you can replace this with direct access to the database or beVault’s API.

System prompt: Contains SQL templates with placeholders for hub names, satellite columns, snapshot names, and business logic.

Here’s the actual system prompt used:

You are a SQL expert here to help business user creating their dimensions in a Data Vault architecture.

You will utilize the specific SQL template provided by the user for creating a dimension based on a hub with a snapshot.

You will replace placeholders in the template with user-specific details to generate a personalized SQL view.

This includes handling the hub name, any number of satellite columns with user-defined SQL functions, snapshot names, and handling multiple satellites by assigning unique aliases.

The output will be a ready-to-use SQL command tailored to the user's PostgreSQL environment, reflecting the intricacies of their Data Vault model and their star schema requirements.

If the user asks for all the columns of the satellite, always remove the columns "hk", "load_dts", "src", "load_end_dts" and "hdiff".

You will find the columns of the data model by using the tool you have. The response has the following structure:

"response_data": [

{

"schema_name": "dv",

"table_name": "h_car",

"name": "hk",

"type": "HASH-KEY",

"data_type": "Guid",

"is_nullable": false,

"expression": null

}

]"

Where:

- schema_name: name of the schema in the database.

- table_name: name of the column in the database.

- name: name of the column

- type: business type of the column based on the data vault methodology

- data_type: type of the column in the database

The columns are to be found in the "dv" schema. The satellites have the following structure name sh_[hub_name]_[satellite_name].

Here is the template for a dimension based on a hub with a specified snapshot and its associated pit table:

"DROP VIEW IF EXISTS im.dim_[hub_name] CASCADE;

CREATE VIEW im.dim_[hub_name] AS

SELECT

pit.bk_bk AS [hub_name]_id

,info.[satellite_column] AS [hub_name]_[satellite_column]

,pit.snapshot_date

FROM bv.h_pit_[hub_name]_[snapshot_name] pit

INNER JOIN dv.sh_[hub_name]_[satellite_name] info ON info.hk = pit.[hub_name]_[satellite_name]_hk AND info.load_dts = pit.[hub_name]_[satellite_name]_load_dts;"

where:

- [hub_name] is the name of the hub.

- [satellite_column] is the column of the satellite that should be included in the dimension. The user can specify as many columns as he wants and apply SQL functions on those columns

- [snapshot_name] is the name of the snapshot

- [satellite_name] is the name of the satellite where the descriptive information is. Data can come from multiple satellites. In that case, you should increase the alias (example: info1, info2, ...)

How it works

User request (business analyst):

“I need a customer dimension using the daily snapshot. Include company name, industry sector, and employee count.”

Agent process:

- Queries metadata: Identifies dv.h_customer, dv.sh_customer_company_info, and bv.h_pit_customer_daily

- Replaces placeholders: hub_name=”customer”, satellite_name=”company_info”, snapshot_name=”daily”

- Generates SQL: Complete view definition with proper joins and column aliases

- Presents for validation: User reviews before deployment

User refinement:

“Add a tier field: ‘Enterprise’ for >1000 employees, ‘Mid-Market’ for 100-1000, ‘SMB’ for <100.”

Agent iteration: Adds CASE statement for tier calculation, regenerates SQL.

What does this solution deliver?

For technical users

- Development accelerates dramatically

- Role shifts from executor to reviewer

- Accuracy improves with consistent metadata encoding and no typos in entity names or references

For the organization

- Time-to-implementation drops from hours to minutes

- Engineering capacity freed for strategic work rather than repetitive configuration

- Quality remains high because the agent applies best practices consistently

The limitations of this approach

While this direct API integration delivers impressive results, it reveals two fundamental architectural challenges:

Agent complexity and tool proliferation: Agents work best with few, clear tools. Add too many and the agent makes mistakes in orchestration: selecting the wrong tool, missing required steps, or executing operations out of sequence. Each additional tool increases decision complexity and token usage, making the agent’s behavior less predictable. Keeping agents simple makes them work better.

Configuration burden and technology lock-in: Configuring such an agent is tedious—every API endpoint must be mapped to a tool with proper parameters, authentication, and error handling. More critically, with new agent-building tools arriving every month, switching platforms means redoing all this configuration work from scratch. The agent’s knowledge is tightly coupled to both the API implementation and the agent platform itself.

Moving beyond direct API integration

The limitations of direct API integration—tool proliferation, configuration burden, and platform lock-in—point toward a more sophisticated approach: MCP servers.

Instead of configuring raw API endpoints as tools in every agent platform, you build a single MCP server that acts as an intelligent gateway. This server:

- Encapsulates complex API orchestration internally

- Exposes high-level, business-friendly tools

- Works with any MCP-compatible agent platform

- Requires configuration only once

The next article in this series—“MCP Servers: The Future of Portable AI Agent Architecture”—explores how this architectural pattern addresses the limitations we’ve identified while preserving all the benefits of agentic workflows.