The challenge with direct API integration

The previous article showed how AI agents with direct API access deliver dramatic productivity gains—30× faster model implementation, natural language interfaces for business users, consistent quality at scale.

But it also revealed fundamental limitations:

Tool proliferation: More tools mean more decisions, higher token usage, and less predictable agent behavior. Agents work best when they’re simple.

Configuration burden: Every API endpoint requires detailed tool definitions—parameters, authentication, error handling. For a system with 10 endpoints, you’re writing and maintaining 10 configurations.

Platform lock-in: New agent frameworks launch constantly. Switching from one platform to another means rebuilding all tool configurations from scratch. Your agent’s knowledge is tightly coupled to both API implementation and platform specifics.

These aren’t minor inconveniences. They’re architectural constraints that limit scalability, portability, and long-term maintainability.

Understanding MCP (Model Context Protocol)

Model Context Protocol (MCP) is an open, standardized protocol for exposing tools and context to AI agents. In our context, we use it as a gateway in front of the beVault API: it takes over the orchestration logic and offers the agent business-friendly parameters.

Instead of exposing raw API complexity to the agent, the MCP server handles the technical details internally. Think of it as translating between how humans naturally express requests and how APIs technically require them.
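To make that translation concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `FakeBeVaultApi` class, its methods, the ID format, and the `create_hub` tool are illustrative stand-ins, not the real beVault API or the upcoming MCP server. It shows one business-friendly tool that resolves a name to a technical ID and chains two low-level calls internally.

```python
"""Sketch only: a business-friendly tool wrapping low-level API calls.

All names here (FakeBeVaultApi, create_hub, the ID format) are hypothetical
and exist purely for illustration; they are not the real beVault API.
"""
from dataclasses import dataclass, field


@dataclass
class FakeBeVaultApi:
    """In-memory stand-in for a raw REST API that works with technical IDs."""
    projects: dict = field(default_factory=lambda: {"Sales": "prj-001"})
    hubs: list = field(default_factory=list)

    def find_project_id(self, name: str) -> str:
        # Low-level call 1: look up the technical ID behind a project name.
        if name not in self.projects:
            raise KeyError(f"Unknown project: {name}")
        return self.projects[name]

    def post_hub(self, project_id: str, payload: dict) -> dict:
        # Low-level call 2: create the hub under the resolved project ID.
        hub = {"id": f"hub-{len(self.hubs) + 1:03d}", "projectId": project_id, **payload}
        self.hubs.append(hub)
        return hub


def create_hub(api: FakeBeVaultApi, project_name: str, hub_name: str, business_key: str) -> str:
    """The tool the agent sees: business terms in, short confirmation out."""
    try:
        project_id = api.find_project_id(project_name)
    except KeyError as exc:
        # Errors come back as plain language the agent can relay to the user.
        return f"Could not create hub: {exc.args[0]}"
    hub = api.post_hub(project_id, {"name": hub_name, "businessKey": business_key})
    return f"Created hub '{hub['name']}' in project '{project_name}'."


api = FakeBeVaultApi()
print(create_hub(api, "Sales", "Customer", "customer_number"))
print(create_hub(api, "Finance", "Invoice", "invoice_number"))
```

The agent never sees `prj-001` or the two-step call sequence. It passes names a business user would recognise and gets back a sentence it can relay, including when the project does not exist.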

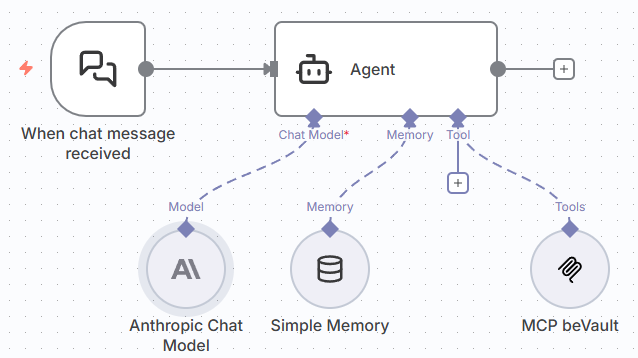

The beVault MCP server architecture

Unlike the direct API approach in Article 3, which required configuring each tool individually in every agent platform, the MCP server provides a dynamic list of tools that agents can discover and use automatically.

The dynamic advantage:

When you add a new tool to the MCP server—for example, a tool to create satellites or manage source mappings—every connected agent immediately gains access to that capability. No need to reconfigure agents, update tool definitions, or restart workflows.

The agent simply queries: “What tools are available?” and receives the current list with descriptions and parameters.
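In MCP, that question is a JSON-RPC 2.0 request with the method `tools/list`, and each tool in the reply carries a `name`, a `description`, and an `inputSchema`. The sketch below imitates that exchange with an in-memory registry rather than a real MCP SDK or transport. The `ToolRegistry` class, the `schema` helper, and both tool names are assumptions made for illustration.

```python
"""Sketch only: dynamic tool discovery in the style of MCP's tools/list.

ToolRegistry and the tool names are hypothetical; a real server would use an
MCP SDK and a transport (stdio or HTTP) instead of direct function calls.
"""
import json


class ToolRegistry:
    def __init__(self) -> None:
        # Maps tool name -> tool definition as returned to the agent.
        self._tools = {}

    def register(self, name: str, description: str, input_schema: dict) -> None:
        # Adding a tool here is the only step needed to expose it to agents.
        self._tools[name] = {
            "name": name,
            "description": description,
            "inputSchema": input_schema,
        }

    def handle(self, request: dict) -> dict:
        # Answer a JSON-RPC 2.0 request; only tools/list is sketched here.
        if request.get("method") == "tools/list":
            result = {"tools": list(self._tools.values())}
            return {"jsonrpc": "2.0", "id": request["id"], "result": result}
        error = {"code": -32601, "message": "Method not found"}
        return {"jsonrpc": "2.0", "id": request.get("id"), "error": error}


def schema(**props: str) -> dict:
    # Helper: build a JSON Schema object where every property is required.
    return {
        "type": "object",
        "properties": {key: {"type": kind} for key, kind in props.items()},
        "required": list(props),
    }


registry = ToolRegistry()
registry.register("create_hub", "Create a hub from business terms.",
                  schema(project_name="string", hub_name="string"))

list_request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}
before = registry.handle(list_request)

# A new capability is added on the server side only...
registry.register("create_satellite", "Attach a satellite to an existing hub.",
                  schema(hub_name="string", satellite_name="string"))

# ...and the very next discovery call already includes it.
after = registry.handle(list_request)
print([tool["name"] for tool in before["result"]["tools"]])
print([tool["name"] for tool in after["result"]["tools"]])
print(json.dumps(after["result"]["tools"][1], indent=2))
```

Nothing changes on the agent side between the two calls. The second response is longer only because the server's registry grew.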

Keeping agents focused:

Most AI platforms allow you to filter which tools an agent can access from the MCP server. This means you can:

- Create a simple, focused agent that only uses 2-3 specific tools for a narrow task

- Build a comprehensive agent with access to all available tools for complex workflows

- Gradually expand capabilities by adding tools to an agent’s filter as users gain confidence

The critical difference: The MCP server maintains one source of truth for tool definitions. Add a tool once, and it’s available to any agent you choose to grant access to—immediately, without reconfiguration.
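The filtering described above can be pictured as an allow-list applied to one shared catalogue. This is a rough sketch, not how any particular agent platform implements it: the tool names, the agent names, and the `tools_for` function are all hypothetical.

```python
"""Sketch only: per-agent tool filtering over one shared tool catalogue.

The catalogue entries and agent profiles are hypothetical examples.
"""

# Single source of truth, as the MCP server would expose it.
SERVER_TOOLS = {
    "list_hubs": "List the hubs in a project.",
    "create_hub": "Create a hub from business terms.",
    "create_satellite": "Attach a satellite to an existing hub.",
    "manage_source_mapping": "Map a source column to a model attribute.",
}

# Each agent is configured with an allow-list; None means "everything".
AGENT_FILTERS = {
    "hub-assistant": {"list_hubs", "create_hub"},
    "modelling-expert": None,
}


def tools_for(agent: str) -> dict:
    """Return the subset of server tools this agent is allowed to see."""
    allowed = AGENT_FILTERS[agent]
    if allowed is None:
        return dict(SERVER_TOOLS)
    # Names in the filter that the server does not offer are simply ignored.
    return {name: desc for name, desc in SERVER_TOOLS.items() if name in allowed}


print(sorted(tools_for("hub-assistant")))
print(len(tools_for("modelling-expert")))

# Expanding an agent later is a one-line change to its filter.
AGENT_FILTERS["hub-assistant"].add("create_satellite")
print(sorted(tools_for("hub-assistant")))
```

The tool definitions live in one place. Each agent only holds a list of names, so widening or narrowing its scope never touches the definitions themselves.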

Architectural advantages

- Encapsulated complexity: All the orchestration—chaining API calls, resolving technical IDs, handling errors—lives inside the MCP server. The agent speaks pure business language.

- Technology portability: Point any agent platform, whether n8n, Claude Desktop, LangChain, or whatever comes next, at the same MCP endpoint. There is no need to rebuild tools or redo configuration: the MCP server stays the same while the platform around it changes.

- Centralised maintenance: When the beVault API changes, you update the MCP server once. Every connected agent benefits instantly, no matter where it runs.

- Lower agent complexity: One high-level tool with clear capabilities beats a handful of low-level ones. Fewer decisions, fewer tokens, more predictable behaviour.

- Reduced token usage and cost: Tailored tools and optimized responses mean less text passes through the agent. In our first tests, the MCP server reduced token usage by 90% for the same requests.
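One way to picture the "optimized responses" part of the last point is a server that trims each raw API response before handing it to the agent. The sketch below is an assumption about how such trimming could look, not a description of the beVault MCP server. The payload shape and field names are invented, and the character count is only a crude stand-in for tokens, unrelated to the measurement quoted above.

```python
"""Sketch only: trimming a raw API response before it reaches the agent.

The payload shape and field names are invented for illustration, and the
character count is only a crude stand-in for tokens.
"""
import json

# Hypothetical raw response, full of fields an agent rarely needs.
raw_response = {
    "id": "hub-001",
    "name": "Customer",
    "businessKey": "customer_number",
    "createdAt": "2024-01-01T00:00:00Z",
    "createdBy": "system",
    "links": [{"rel": "self", "href": "/hubs/hub-001"}],
    "audit": {"version": 3, "checksum": "0" * 64},
}

# Fields the agent actually needs in order to answer the user.
KEEP = ("name", "businessKey")


def slim(response: dict, keep: tuple = KEEP) -> dict:
    """Keep only the whitelisted fields; missing ones are skipped."""
    return {key: response[key] for key in keep if key in response}


full_text = json.dumps(raw_response)
slim_text = json.dumps(slim(raw_response))
print(slim_text)
print(f"{len(full_text)} characters before, {len(slim_text)} after")
```

Because the trimming happens inside the server, every connected agent receives the shorter response without any change to its own configuration.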

Current status and roadmap

An MCP server for beVault is coming as an open-source project. This will enable anyone to create simple agents that automate tasks in beVault—combining the robust structure beVault provides with the speed and intelligence of AI.

What’s available now:

- Direct API integration (Article 3) is fully supported and production-ready

- It delivers immediate value while laying the foundation for future MCP adoption

- All architectural patterns work today with existing beVault APIs

What’s coming:

- Open-source MCP server for beVault

- Community-driven development and contributions

- Documentation and deployment guides

- Example agents and best practices

- Lower barrier to entry for AI-assisted Data Vault implementation

Why open source matters:

By releasing the MCP server as open source, we’re enabling the community to:

- Extend functionality for specific use cases

- Contribute improvements and new tools

- Share agent implementations and patterns

- Adapt the architecture to unique requirements

Stay tuned: follow beVault’s announcements for release updates, documentation, and community resources.